Research Movies 2009

Autonomous Navigation on 3.5km Sidewalk of University of Tsukuba

To achieve autonomous navigation in the assigned environment, the environment is divided into sidewalk sections and junction sections, each with its own navigation method, and the robot switches between the two methods during navigation. A sidewalk section is an area whose surface can be recognized by a URG sensor, so the robot can navigate autonomously along it. A junction section is an area in which the robot travels using path-planning navigation based on data from the URG sensor and a 2D map provided in advance. In this experiment, excluding the off-campus section, the navigation method succeeded over 3.5 km of the 4 km sidewalk.

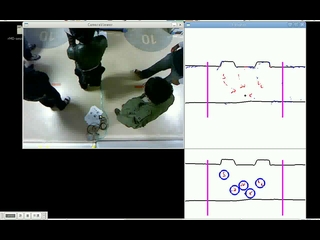

Measurement System of Human and Shapes using Multiple Mirrors and URG Sensor

A system that measures the number of people in a designated area was constructed. Using just one URG sensor, together with light reflected from mirror-surfaced tape pasted on the surrounding walls, the system is robust to measurement errors caused by blind spots. The left side shows the image taken by a camera mounted on the ceiling. The top right shows the measured data, where '+' marks the sensor location, red represents data obtained directly from the sensor, and blue represents data obtained via reflections from the mirror-surfaced tape on the wall. The bottom right shows the result after noise removal and segmentation, with the blue circles representing the humans extracted from the data.

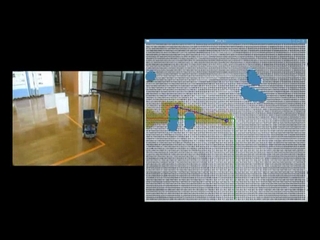
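
The reflected (blue) measurements described above come from beams that bounced off the mirror-surfaced tape, so a range sensor places them along the original beam direction, apparently behind the wall. One way to recover the true position is to mirror each apparent point across the wall line. The sketch below illustrates only that geometric step; the function name, the wall representation (two points on the wall), and the sample coordinates are assumptions for illustration and are not taken from the original system.

```python
def reflect_across_wall(point, wall_a, wall_b):
    """Mirror a 2D point across the line through wall_a and wall_b."""
    px, py = point
    ax, ay = wall_a
    bx, by = wall_b
    dx, dy = bx - ax, by - ay
    length_sq = dx * dx + dy * dy
    if length_sq == 0:
        raise ValueError("wall_a and wall_b must be distinct points")
    # Project the point onto the wall line (foot of the perpendicular).
    t = ((px - ax) * dx + (py - ay) * dy) / length_sq
    foot_x, foot_y = ax + t * dx, ay + t * dy
    # The mirrored point lies the same distance on the other side of the wall.
    return (2 * foot_x - px, 2 * foot_y - py)


# Hypothetical example: sensor at the origin, mirrored wall along x = 2.
# The sensor "sees" a point at (3, 1), which is behind the wall.
apparent_point = (3.0, 1.0)
true_point = reflect_across_wall(apparent_point, (2.0, 0.0), (2.0, 5.0))
# true_point is (1.0, 1.0), inside the room
```

After this step, direct and reflected points share one coordinate frame and can be passed together to noise removal and segmentation.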

Path Planning Method for Obstacle Avoidance While Moving on A Designated Path

Based on the Distance Transform on a two-dimensional grid, an algorithm was constructed that keeps the robot as close as possible to the designated path by varying the weights of grid cells that are not on the designated path. The left side shows the robot's motion, with the orange line on the ground as the preset designated path. The right side shows the avoidance route: the green line is the preset designated path, light blue represents obstacles detected by the URG sensor, red is the calculated avoidance route, yellow is the red path expanded over areas with no obstacles nearby, and blue represents the path the robot should follow. The robot successfully avoids obstacles while staying close to the designated path.
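
The idea of weighting off-path cells can be sketched on a small grid. The code below is a minimal illustration, not the original implementation: it uses Dijkstra's algorithm as a stand-in for the weighted distance propagation from the goal, and the function names, the 4-connected neighborhood, the off-path weight of 3, and the 5x7 example grid are all assumptions.

```python
import heapq

NEIGHBOR_STEPS = ((1, 0), (-1, 0), (0, 1), (0, -1))  # 4-connected grid


def neighbors(cell, rows, cols):
    """Yield the in-bounds 4-connected neighbors of a cell."""
    r, c = cell
    for dr, dc in NEIGHBOR_STEPS:
        nr, nc = r + dr, c + dc
        if 0 <= nr < rows and 0 <= nc < cols:
            yield (nr, nc)


def weighted_distance_transform(rows, cols, goal, obstacles, path_cells,
                                off_path_weight=3.0):
    """Return cost-to-goal per cell; off-path cells cost more to occupy."""
    cost = [[float("inf")] * cols for _ in range(rows)]
    cost[goal[0]][goal[1]] = 0.0
    queue = [(0.0, goal)]
    while queue:
        current_cost, cell = heapq.heappop(queue)
        if current_cost > cost[cell[0]][cell[1]]:
            continue  # stale queue entry
        for nxt in neighbors(cell, rows, cols):
            if nxt in obstacles:
                continue  # obstacle cells are never entered
            weight = 1.0 if nxt in path_cells else off_path_weight
            new_cost = current_cost + weight
            if new_cost < cost[nxt[0]][nxt[1]]:
                cost[nxt[0]][nxt[1]] = new_cost
                heapq.heappush(queue, (new_cost, nxt))
    return cost


def extract_route(cost, start):
    """Step to the cheapest neighbor repeatedly until the goal (cost 0)."""
    rows, cols = len(cost), len(cost[0])
    if cost[start[0]][start[1]] == float("inf"):
        raise ValueError("goal is unreachable from start")
    route = [start]
    while cost[route[-1][0]][route[-1][1]] > 0:
        route.append(min(neighbors(route[-1], rows, cols),
                         key=lambda p: cost[p[0]][p[1]]))
    return route


# Hypothetical 5x7 grid: designated path along row 2, one obstacle on it.
designated = {(2, c) for c in range(7)}
blocked = {(2, 3)}
cost_grid = weighted_distance_transform(5, 7, goal=(2, 6), obstacles=blocked,
                                        path_cells=designated)
route = extract_route(cost_grid, start=(2, 0))
```

In this example the route leaves row 2 only for the three cells needed to pass the obstacle and then rejoins the designated path. Raising `off_path_weight` makes the route hug the designated path more tightly, while a weight of 1 reduces to an ordinary shortest path.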

Outdoors Navigation for Patrolling around Building

The navigation path is given to the robot in advance. The robot's self-localization is then corrected by comparing URG sensor data of the environment recorded during the preparation stage with the data obtained at run time. (The robot halts temporarily while its self-localization is being corrected.) In the movie, the robot patrols around the building for three rounds while avoiding obstacles such as bicycles.

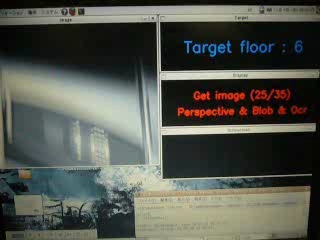
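
The description above does not say how the recorded and run-time scans are compared. As one possible illustration, the sketch below grid-searches the 2D shift that best aligns a run-time scan with a recorded reference scan. It is a brute-force stand-in that ignores rotation; the function names, search range, step size, and sample points are all assumptions.

```python
import math


def mean_nearest_distance(scan, reference):
    """Average distance from each scan point to its closest reference point."""
    total = 0.0
    for x, y in scan:
        total += min(math.hypot(x - rx, y - ry) for rx, ry in reference)
    return total / len(scan)


def estimate_offset(scan, reference, search_range=0.5, step=0.1):
    """Grid-search the (dx, dy) shift that best aligns scan with reference.

    Both scans are assumed to be non-empty lists of (x, y) points in meters.
    """
    steps = int(round(search_range / step))
    best = (float("inf"), 0.0, 0.0)
    for i in range(-steps, steps + 1):
        for j in range(-steps, steps + 1):
            dx, dy = i * step, j * step
            shifted = [(x + dx, y + dy) for x, y in scan]
            score = mean_nearest_distance(shifted, reference)
            if score < best[0]:
                best = (score, dx, dy)
    return best[1], best[2]


# Hypothetical reference scan of a wall corner, recorded in advance.
reference_scan = [(0.0, 0.0), (0.5, 0.0), (1.0, 0.0), (1.5, 0.0), (2.0, 0.0),
                  (0.0, 0.5), (0.0, 1.0), (0.0, 1.5)]
# Run-time scan of the same corner, displaced by a drifted position estimate.
runtime_scan = [(x - 0.2, y + 0.1) for x, y in reference_scan]
dx, dy = estimate_offset(runtime_scan, reference_scan)
```

The returned `(dx, dy)` is the correction that would be applied to the position estimate. A practical system would also estimate heading and would typically use a more efficient matching method.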

Elevator Control Panel Recognition for Mobile Robot

To let the mobile robot move between floors, recognition of the operation display panel (such as the buttons) of an elevator seen for the first time has been achieved. After the robot gets into an elevator, images of the elevator interior are taken with a pan-tilt camera and passed through image processing and number recognition to locate and recognize the operation display panel. Then, by checking the brightness of the button for the target floor, the robot determines whether the button has already been pushed. When the button's light is confirmed to have turned off, the robot gets off the elevator.
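
The brightness check on the target-floor button can be sketched as a threshold on the mean pixel value inside the button's image region. This is a minimal sketch under stated assumptions: the image is a grayscale list of rows with values 0 to 255, the button region has already been located, and the function names and the threshold of 128 are hypothetical.

```python
def mean_brightness(gray_image, region):
    """Average pixel value inside region = (top, left, bottom, right).

    bottom and right are exclusive, as in Python slicing.
    """
    top, left, bottom, right = region
    pixels = [gray_image[r][c]
              for r in range(top, bottom)
              for c in range(left, right)]
    if not pixels:
        raise ValueError("region is empty")
    return sum(pixels) / len(pixels)


def is_button_lit(gray_image, region, threshold=128):
    """Treat the button as lit when its region is brighter than the threshold."""
    return mean_brightness(gray_image, region) >= threshold


# Hypothetical 4x4 image with a bright 2x2 button in the upper-left corner.
image = [
    [220, 230, 20, 25],
    [210, 240, 30, 20],
    [15, 20, 25, 30],
    [10, 15, 20, 25],
]
lit = is_button_lit(image, (0, 0, 2, 2))      # True: mean is 225
unlit = is_button_lit(image, (2, 2, 4, 4))    # False: mean is 25
```

Watching the same region over successive frames and waiting for the result to change from lit to unlit corresponds to the moment the robot gets off the elevator.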